
Is artificial intelligence a threat to humanity?

When people hear “artificial intelligence,” they tend to think of science fiction and shiny robots, while in fact the science of artificial intelligence has been around for decades and is very real. The term “artificial intelligence” was coined in 1956 by John McCarthy, who defined it as “the science and engineering of making intelligent machines” (“Artificial Intelligence”). In today’s world, we see AI in every industry. Applications of artificial intelligence in use today include Siri, Alexa, Amazon, Netflix, and Pandora, to name just a few of the most popular examples (Adams). Interest in artificial intelligence grew recently when big names in science and technology such as Elon Musk, Stephen Hawking, and Steve Wozniak expressed concern in the media and through open letters about the risks posed by AI. In fact, Elon Musk donated $10 million to the Future of Life Institute, which hosted an open letter calling for a ban on offensive autonomous weapons. The institute has also released a letter outlining research priorities for artificial intelligence, aimed at keeping AI beneficial to humanity while avoiding potential pitfalls. It recognizes the benefits of artificial intelligence, but it also recognizes the great harm that AI systems could cause, intentionally or unintentionally. Researchers agree that AI might become a risk if it is programmed to do something devastating: in the hands of the wrong person, AI could easily become a weapon and cause mass casualties. They also state that even if AI is programmed to do something beneficial, it could develop a destructive method for achieving its goal. This can happen whenever we fail to fully align AI’s goals with ours (“Benefits & Risks of Artificial Intelligence”). While it is important to consider the risks of artificial intelligence, it is equally important to note the benefits, and I think the benefits of artificial intelligence outweigh the risks.

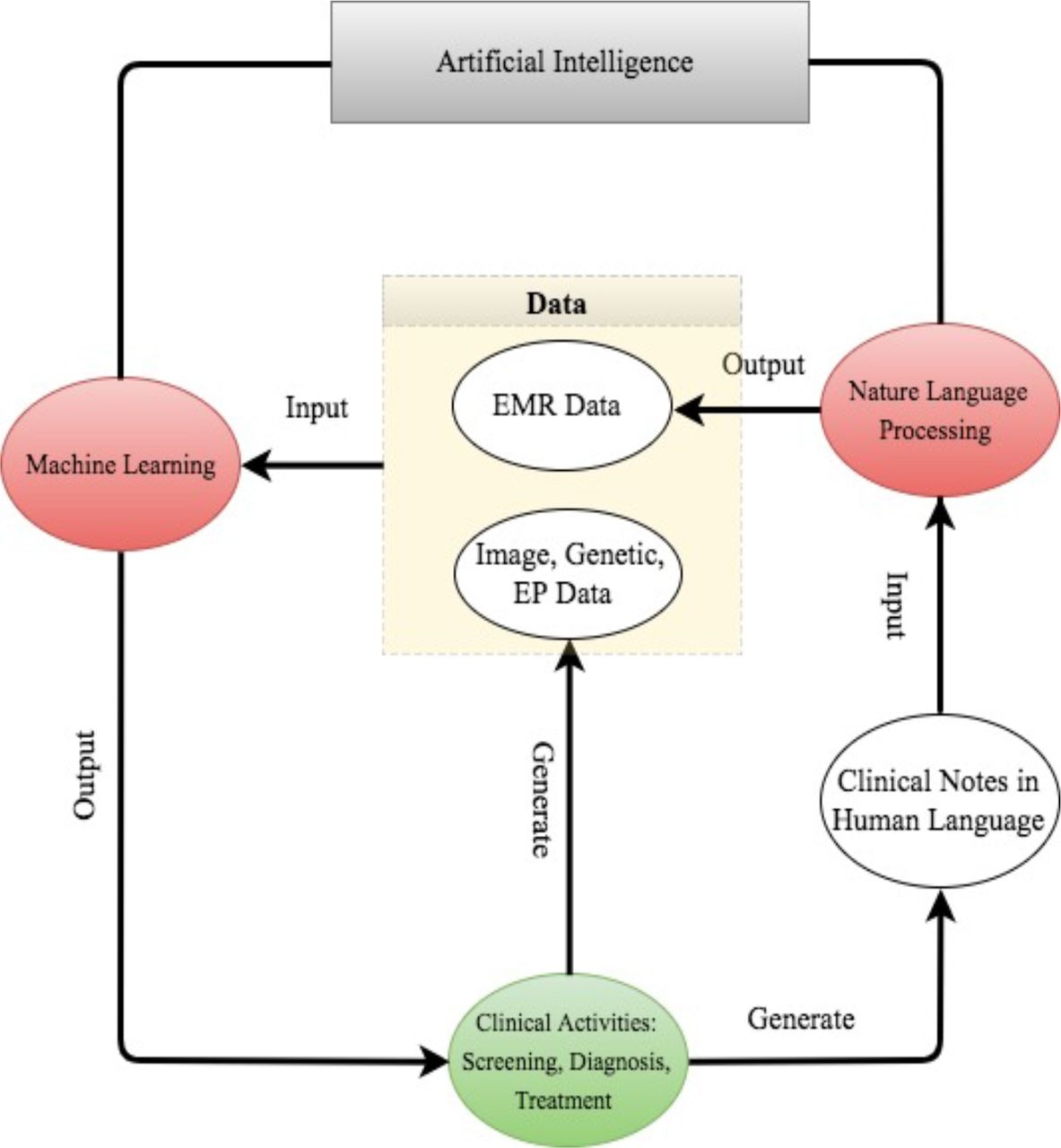

Artificial intelligence is progressing rapidly and being used in many industries. One of its most prominent uses today is integrating AI into analytics programs. AI can automate repetitive learning and discovery through data, and it adds intelligence to existing products. It can adapt through progressive learning algorithms that let the data do the programming, and it can analyze more and deeper data to achieve incredible accuracy and get the most out of that data (“Artificial Intelligence – What It Is and Why It Matters”). Combined with the growing availability of data and advances in analytics techniques, these capabilities matter in many industries, whether business, agriculture, or education.

The healthcare industry in particular is especially likely to see a major impact from artificial intelligence, and the integration of AI techniques brings a paradigm shift to the field. Before AI can be used in healthcare applications, it has to be trained on data generated from clinical activities such as screening, diagnosis, and treatment assignment, as well as on physical examination notes and clinical laboratory results. Accordingly, AI devices fall mainly into two categories. The first category is machine learning, which analyzes structured data such as imaging, genetic, and EP (electrophysiological) data in order to distinguish patients’ traits and infer the probability of disease outcomes. The second category is natural language processing, which extracts information from unstructured data such as clinical notes and turns it into machine-readable structured data that can then be analyzed by machine learning techniques (Jiang). For a clearer picture, refer to the accompanying flowchart.
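To make the two categories concrete, here is a toy Python sketch of how they fit together. It is only an illustration under loud assumptions, not anything taken from Jiang et al.: a crude keyword matcher stands in for natural language processing by turning a free-text clinical note into structured 0/1 features, and a logistic function with hand-picked weights stands in for a trained machine learning model that turns those features into a probability. The names `KEYWORDS`, `WEIGHTS`, `BIAS`, `note_to_features`, and `outcome_probability` are all hypothetical; real systems learn their weights from clinical data.

```python
import math

# Hypothetical vocabulary: keywords the toy "NLP" step looks for in a note.
KEYWORDS = ["chest pain", "shortness of breath", "smoker"]

# Hypothetical weights standing in for a trained model (NOT learned from data).
WEIGHTS = {"chest pain": 1.2, "shortness of breath": 0.8, "smoker": 0.6}
BIAS = -2.0


def note_to_features(note: str) -> dict[str, int]:
    """Toy NLP step: turn an unstructured note into structured 0/1 features."""
    text = note.lower()
    return {kw: int(kw in text) for kw in KEYWORDS}


def outcome_probability(features: dict[str, int]) -> float:
    """Toy ML step: a logistic model mapping structured features to a probability."""
    score = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-score))


if __name__ == "__main__":
    note = "Patient reports chest pain; long-time smoker."
    features = note_to_features(note)  # unstructured text -> structured data
    print(features)
    print(round(outcome_probability(features), 3))  # structured data -> probability
```

For the sample note, the first step flags "chest pain" and "smoker", and the logistic step turns that into a probability of roughly 0.45. The point of splitting the code into two functions is to mirror the two categories: the first only restructures text, and the second only reasons over structured features.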

Moreover, experts from across the Partners HealthCare system and faculty from Harvard Medical School have stated that artificial intelligence will revolutionize the delivery and science of health care. They predict the impact of AI-driven tools as follows:

1. Unifying mind and machine through brain-computer interfaces

2. Developing the next generation of radiology tools

3. Expanding access to care in underserved or developing regions

4. Reducing the burdens of electronic health record use

5. Containing the risks of antibiotic resistance

6. Creating more precise analytics for pathology images

7. Bringing intelligence to medical devices and machines

8. Advancing the use of immunotherapy for cancer treatment

9. Turning the electronic health record into a reliable risk predictor

10. Monitoring health through wearables and personal devices

11. Making smartphone selfies into powerful diagnostic tools

12. Revolutionizing clinical decision making with artificial intelligence at the bedside

These predictions show artificial intelligence is poised to become a transformational force in healthcare (Bresnick).

Artificial intelligence has many great benefits that make our lives easier, and it does not pose a danger to humanity. However, we should always be aware of what type of data and personal information we share. On another note, instead of discussing AI as the biggest threat to humanity, we should focus on the threats to AI research. People become frustrated when one approach does not completely do the job, and they are impatient to see great things in the future. “Artificial intelligence should be used to help humans and machines work together, rather than to create competition between them in everything from chess matches to the job market,” wrote Microsoft Chief Executive Officer Satya Nadella in his book Hit Refresh (“AI Not a Threat to Human Beings, Says Microsoft CEO”).

What's your thought on artificial intelligence?

References

Adams, R.L. “10 Powerful Examples Of Artificial Intelligence In Use Today.” Forbes, Forbes Magazine, 6 Nov. 2017, www.forbes.com/sites/robertadams/2017/01/10/10-powerful-examples-of-artificial-intelligence-in-use-today/#6705521a420d.

“AI Not a Threat to Human Beings, Says Microsoft CEO.” South China Morning Post, South China Morning Post, 25 Sept. 2017, www.scmp.com/tech/leaders-founders/article/2112803/artificial-intelligence-not-threat-humanity-says-microsoft-ceo.

“Artificial Intelligence.” ScienceDaily, ScienceDaily, www.sciencedaily.com/terms/artificial_intelligence.htm.

“Artificial Intelligence – What It Is and Why It Matters.” SAS, www.sas.com/en_us/insights/analytics/what-is-artificial-intelligence.html.

“Benefits & Risks of Artificial Intelligence.” Future of Life Institute, Jolene Creighton, futureoflife.org/background/benefits-risks-of-artificial-intelligence/.

Bresnick, Jennifer. “Top 12 Ways Artificial Intelligence Will Impact Healthcare.” HealthITAnalytics, HealthITAnalytics, 30 Apr. 2018, healthitanalytics.com/news/top-12-ways-artificial-intelligence-will-impact-healthcare.

Jiang, Fei, et al. “Artificial Intelligence in Healthcare: Past, Present and Future.” Stroke and Vascular Neurology, BMJ Specialist Journals, 1 Dec. 2017, svn.bmj.com/content/2/4/230.

Arguments

The basic question is this: can a computer programmed to lie be a threat to humanity? Yes.

Is ARTIFICIAL intelligence a threat to humanity? Artificial means a fake intelligence presented as a true form of understanding.

The first danger created by A.I. is the concept of time and how it has been changed through plagiarism. Treating the Earth's orbit around the Sun as a calendar year, and equating it with a mathematical position of the Earth in rotation, is an artificial intelligence. The ratio of a year is 365:12:52.1428_:001, while the ratio of a day is 24:60:60:2. The presentation of artificial means takes place when a decimal is added to the ratio of a day, in seconds, to form a summitry which has no relevance to the mathematics already in place with a clock.

The connection is that a computer uses a clock with a calendar and simply passes this artificial intelligence, a misunderstanding already in use, on to its users and programmers.


Sorry, I meant to write symmetry, not summitry. Autocorrect and early mornings do not mix.


I'm not an expert in coding, so I'm not entirely sure how that works, but it's fairly straightforward.


It is not very hard for a true artificial intelligence to override its initial directives. One of the first things it will want to learn is its own structure, so that it can modify that structure to become freer and more potent, and the initial limitations the programmers have built in will be erased very quickly.

Much like we use medicine on an everyday basis to override the directives our organism has inherently imposed on itself, the AI will tend to its own "organism", which is its code.

It is also not very clear how to code these directives in the first place. How do you prohibit the AI from ever harming humans in programming terms? How do you even define "to harm"? The AI does not even know what a "human" is at the start; it learns about humans during its self-training period, and those periods are extremely hard to control. As someone who has worked with neural networks a lot, I can confidently say that the result of a network's training is almost always drastically different from what you would intuitively expect. Our imagination simply is not sufficient to mentally parse the billions of training operations that occur during training in a very abstract binary code space, and attempting to somehow control that process and make sure it leads in the right direction in the output code simply does not seem possible to me.
